Why context engineering matters in private equity

By Martin Pomeroy, Tech Co-Founder, Deal Engine

Across private equity, firms are racing to deploy large language models against their data ecosystems. CRMs, data rooms, portfolio data, third-party datasets: everything is being fed into LLMs in the hope that insight will emerge on the other side.

In many cases, it doesn’t. Or worse, the output looks plausible but doesn’t meaningfully improve decision-making. The result is frustration, skepticism, and an unintended outcome: doubt about whether AI is actually fit for purpose at the firm level. The problem isn’t the models themselves; it’s the lack of context.

The LLM isn’t the starting point

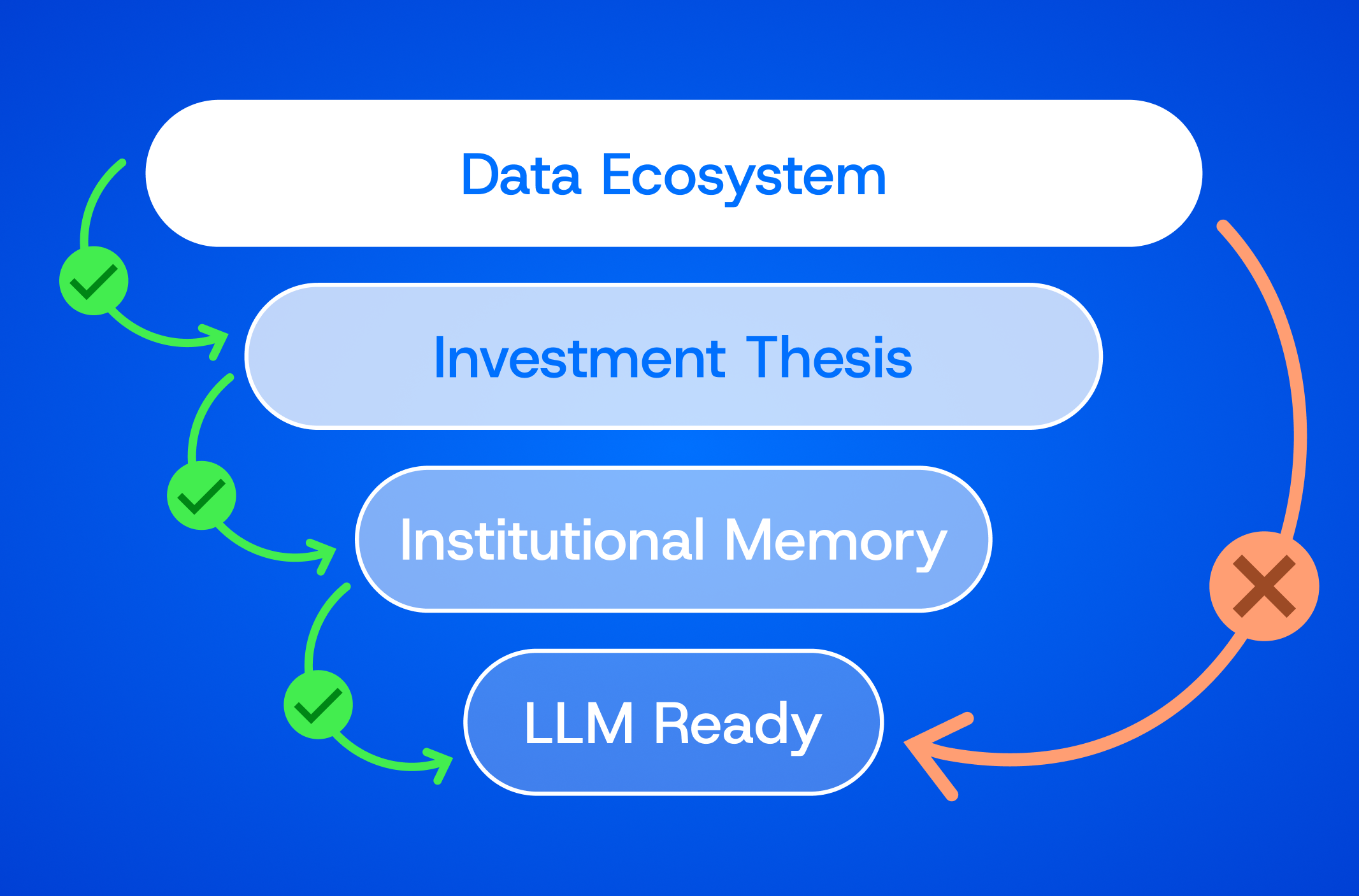

One of the most common mistakes we see is treating the LLM as the first step in the process rather than the last. Firms ask, “What can this model tell us?” before asking the more important question: What data should it be looking at in the first place?

Without disciplined pre-processing, even the best models will struggle. They’ll attempt to reconcile stale information with current signals, weigh irrelevant data alongside high-confidence inputs, and surface results that feel noisy or contradictory. That’s not an intelligence failure; it’s a systems failure.

The right approach is to be deliberate about what data is passed to the model, how it’s framed, and what should be excluded entirely. This is where context engineering becomes critical, and where partners like Deal Engine play a role in helping firms operationalize it correctly.

Start with the investment thesis

Context engineering begins with clarity around the investment thesis.

An LLM should not be searching “the universe of companies.” It should be searching your universe, defined by sector focus, size, geography, ownership structure, growth profile, and other thesis-driven constraints. That thesis provides the lens through which everything else is evaluated.

Once that lens is defined, firms can establish a manual data priority system: agreed-upon rules that determine which data sources carry the most weight, which signals are disqualifying, and how classifiers and scoring models should behave. An agent without memory and context is just a text generator; this structure allows AI to operate with intent rather than ambiguity.
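As a rough illustration of the two ideas above, the sketch below filters companies against a thesis and resolves conflicting values by source priority. Everything in it is hypothetical: the `Thesis` fields, the `SOURCE_PRIORITY` ranking, and the record layout are assumptions made for the example, not a description of any particular firm's rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thesis:
    """Hypothetical thesis constraints; a real thesis would carry more dimensions."""
    sectors: frozenset
    regions: frozenset
    min_revenue_musd: float
    max_revenue_musd: float


# Assumed ranking of data sources: a lower number means more trusted.
SOURCE_PRIORITY = {"crm": 0, "data_room": 1, "third_party": 2}


def in_universe(company: dict, thesis: Thesis) -> bool:
    """Return True only if the company satisfies every thesis constraint."""
    return (
        company["sector"] in thesis.sectors
        and company["region"] in thesis.regions
        and thesis.min_revenue_musd <= company["revenue_musd"] <= thesis.max_revenue_musd
    )


def resolve_field(observations: list) -> dict:
    """Pick the observation from the most trusted source; break ties by recency."""
    return min(
        observations,
        key=lambda o: (SOURCE_PRIORITY[o["source"]], -o["as_of_year"]),
    )
```

The point of writing the rules down this way is that they are agreed in advance and applied before the model sees anything, rather than being left for the model to infer.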

Encoding institutional memory

Another overlooked aspect of context is institutional knowledge.

If a company has already been reviewed and marked non-investable in the CRM, that matters. If it was evaluated recently, resurfacing it again adds little value. Context engineering encodes these realities directly into the workflow: don’t show me what we’ve already seen, don’t revisit decisions without new signal, respect prior conclusions unless conditions change.

Without this layer, LLMs will happily rediscover the same companies over and over again: technically “new,” but operationally useless.
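The rules in this section (skip what has been seen, revisit only on new signal) can be sketched as a small gate that runs before any company reaches the model. This is a minimal sketch under stated assumptions: the CRM is represented as a plain dictionary, the `non_investable` status label and the 180-day `REVIEW_COOLDOWN` are invented for the example, and how a "new signal" is detected is left out of scope.

```python
from datetime import date, timedelta

# Assumed cooldown: do not resurface a company reviewed within this window.
REVIEW_COOLDOWN = timedelta(days=180)


def should_surface(company_id: str, crm: dict, today: date, new_signal_ids: set) -> bool:
    """Decide whether a company should be shown to the deal team again."""
    record = crm.get(company_id)
    if record is None:
        return True  # never reviewed, so it is genuinely new
    if company_id in new_signal_ids:
        return True  # conditions changed, so a prior conclusion may be revisited
    if record["status"] == "non_investable":
        return False  # respect the prior decision
    # Otherwise resurface only once the last review is older than the cooldown.
    return today - record["last_reviewed"] > REVIEW_COOLDOWN
```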

Rubbish in, rubbish out…at the token level

All of this comes back to a simple principle: rubbish in, rubbish out.

LLMs reason over tokens. If you overload them with loosely relevant data, conflicting timeframes, or redundant information, you increase the likelihood of confusion. Controlling tokens isn’t just about efficiency or cost; it’s about analytical clarity.

Context engineering forces discipline. It ensures that only the most relevant, highest-confidence information is passed to the model, in a structure it can reason over effectively. The goal is not more data, but better data.
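One way to picture this discipline is a selection step that drops low-confidence facts and then fills a fixed token budget with the most relevant ones. The sketch below is illustrative only: the `relevance` and `confidence` scores are assumed to come from upstream scoring, the 0.7 threshold is arbitrary, and `estimate_tokens` is a crude four-characters-per-token heuristic standing in for a real tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: assume roughly four characters per token."""
    return max(1, len(text) // 4)


def pack_context(facts: list, budget: int, min_confidence: float = 0.7) -> list:
    """Keep the most relevant high-confidence facts that fit within the token budget."""
    eligible = [f for f in facts if f["confidence"] >= min_confidence]
    # Most relevant first; confidence breaks ties.
    ranked = sorted(
        eligible,
        key=lambda f: (f["relevance"], f["confidence"]),
        reverse=True,
    )
    kept, used = [], 0
    for fact in ranked:
        cost = estimate_tokens(fact["text"])
        if used + cost <= budget:
            kept.append(fact["text"])
            used += cost
    return kept
```

Facts that fall below the confidence threshold never reach the model, however relevant they look, which is the "better data, not more data" principle expressed as a rule.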

Practical use cases: from noise to signal

One practical example is generating LLM-friendly company profiles. Instead of asking a model to synthesize raw data from dozens of sources, firms can provide a normalized, thesis-aligned profile that captures what actually matters for an initial investment decision.

This is especially important when searching for net-new opportunities. Deal sourcing is inherently a needle-in-a-haystack problem, and without context an LLM will surface a lot of useless needles. Context engineering dramatically narrows the search space so that “net new” actually means new and relevant.
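A normalized, thesis-aligned profile of the kind described above might look like the following sketch. The field list in `PROFILE_FIELDS` is a hypothetical example; each firm would choose the fields its own thesis cares about.

```python
# Hypothetical set of thesis-relevant fields, in a fixed order.
PROFILE_FIELDS = ("name", "sector", "region", "revenue_musd", "ownership", "growth_pct")


def build_profile(raw: dict) -> str:
    """Render a fixed-order, fixed-vocabulary profile from a raw company record.

    Fields outside PROFILE_FIELDS are dropped; missing fields are marked
    'unknown' so the model is not left to guess at a gap.
    """
    lines = []
    for field in PROFILE_FIELDS:
        value = raw.get(field)
        lines.append(f"{field}: {value if value is not None else 'unknown'}")
    return "\n".join(lines)
```

Because every profile has the same fields in the same order, the model compares like with like, and anything scraped but irrelevant never costs a token.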

Predictability that enables trust and accelerates user adoption

When context is properly engineered, AI becomes predictable. The same inputs produce consistent outputs. The process can be repeated, audited, and trusted.
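Auditability depends on being able to show exactly what was passed to the model. A minimal sketch, assuming the engineered context is a JSON-serializable dictionary, is to store a fingerprint of it alongside each output. The function name `context_fingerprint` is hypothetical, and note that this makes the inputs verifiable; it does not by itself make a model's output deterministic.

```python
import hashlib
import json


def context_fingerprint(context: dict) -> str:
    """Return a stable SHA-256 hex digest of the context.

    Keys are sorted and whitespace is fixed so that logically identical
    contexts always produce the same fingerprint.
    """
    canonical = json.dumps(
        context, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

If two runs share a fingerprint, any difference in output came from the model or its settings rather than from the data it was given.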

Ultimately, that’s what deal teams want: all the relevant information at a glance, presented clearly enough to make a confident yes-or-no decision. Not a black box, not a novelty, but a reliable system.

The future of AI in private equity won’t be defined by who uses the biggest model. It will be defined by who asks and answers the right question first: what data are we passing to the model?

To learn more about how Deal Engine has advised firms on this very conundrum for over a decade, get in touch.
