Unify your data, unleash your firm's AI

A purpose-built dealmaking platform for private equity that plugs into your tech stack, unifying market and proprietary data to enable AI agents to quickly source deals and provide context for each investment thesis.


"Market context integration is key to bringing Agentic AI to life."

“We’ve decided to deploy AI for process automation of tasks in the back office, document classification, and website scraping via Deal Engine to identify companies in niche sectors.”

Rory Cooke

Senior Data Analyst

“By pairing deliberate data engineering with effective AI agents, designed to source deals matching each investment thesis, firms now have a platform that evolves with their strategy—flexible, white-labelled, and fully equipped for the next decade of innovation.”

Phil Westcott

CEO, Deal Engine

The firm's data ecosystem, in one intelligent engine.

Integrate all internal and external data sources into a living, learning data engine built to optimize dealmaking and drive sustained competitive advantage for your firm.

Helping tech leadership get data in the fast lane

Our purpose-built data engine pulls together your entire market and proprietary data ecosystem, fueling your AI roadmap.

Agentic AI for PE, from origination to value creation

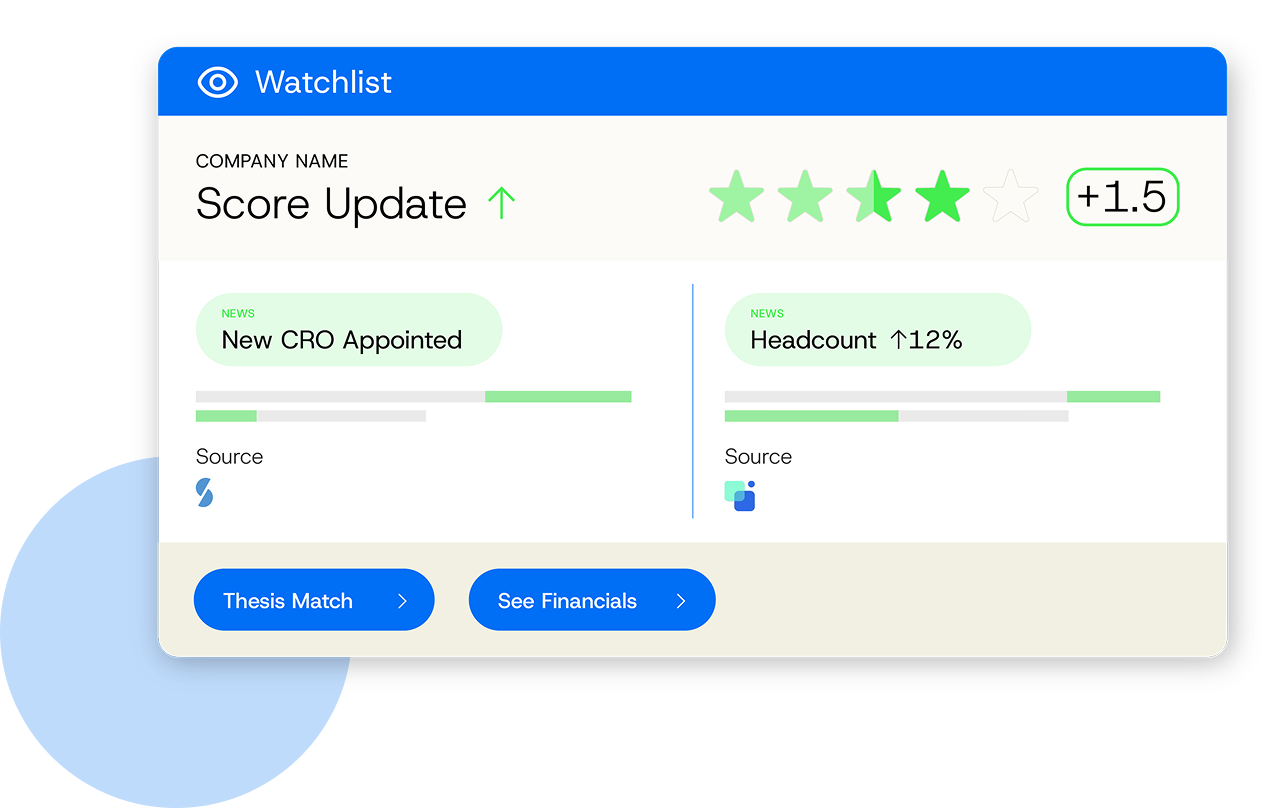

Empower origination teams with codified scoring, net-new recommendations, watchlists and tracked deals.

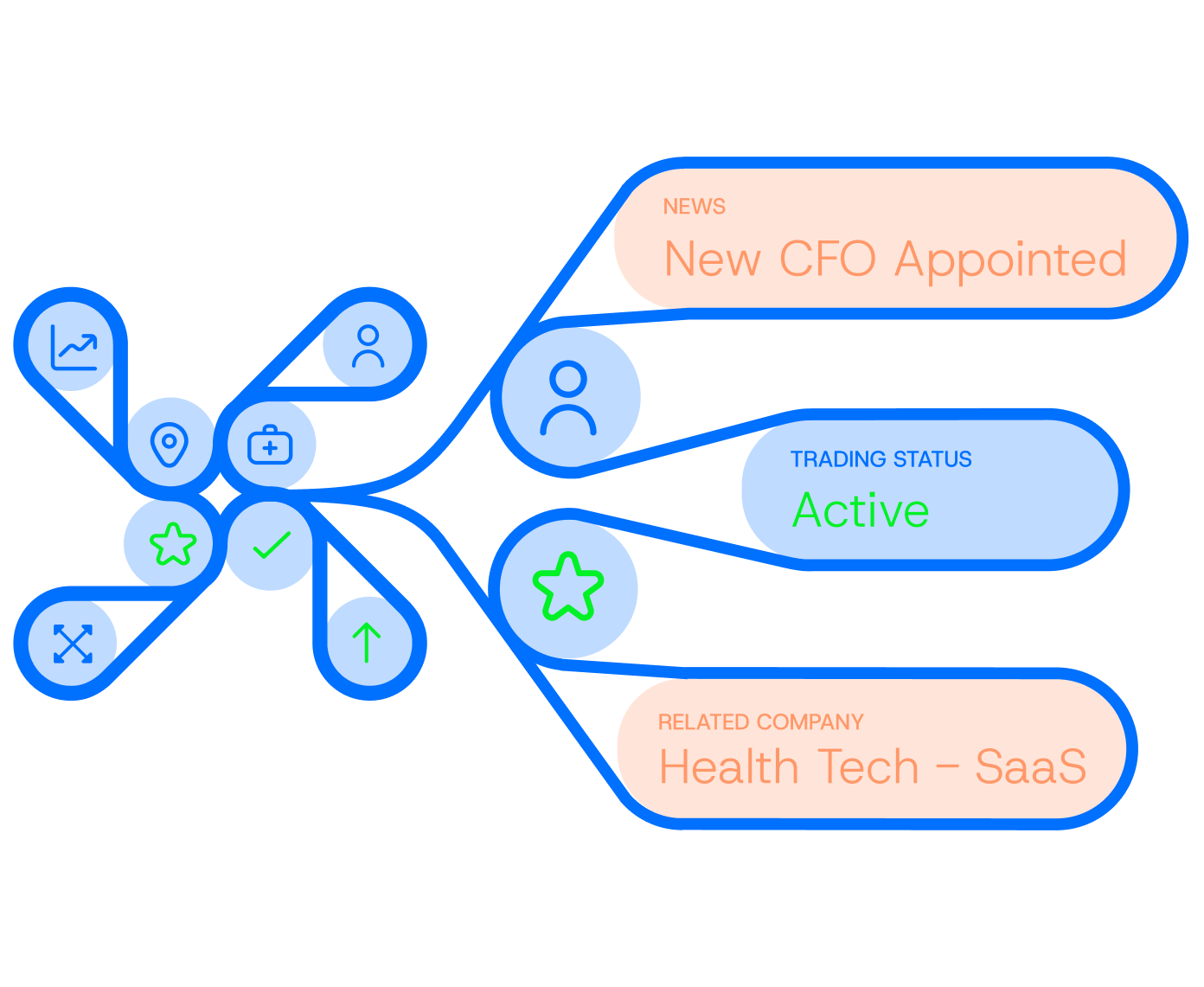

Connecting the unconnected in dealmaking

Unifying the data, intelligence, and signals that exist for private equity firms, and transforming them into deals.

Finally, a platform your firm can truly customize

Deal Engine is offered as a white-labelled solution, resulting in faster onboarding, adoption, brand alignment and organizational buy-in.

Find more net-new deals for your firm

Codify your strategy, track the market and increase relevance. Deal Engine delivers always-on agentic AI intelligence that continuously monitors your entire investable universe — specified by your thesis — and surfaces high-fit targets.

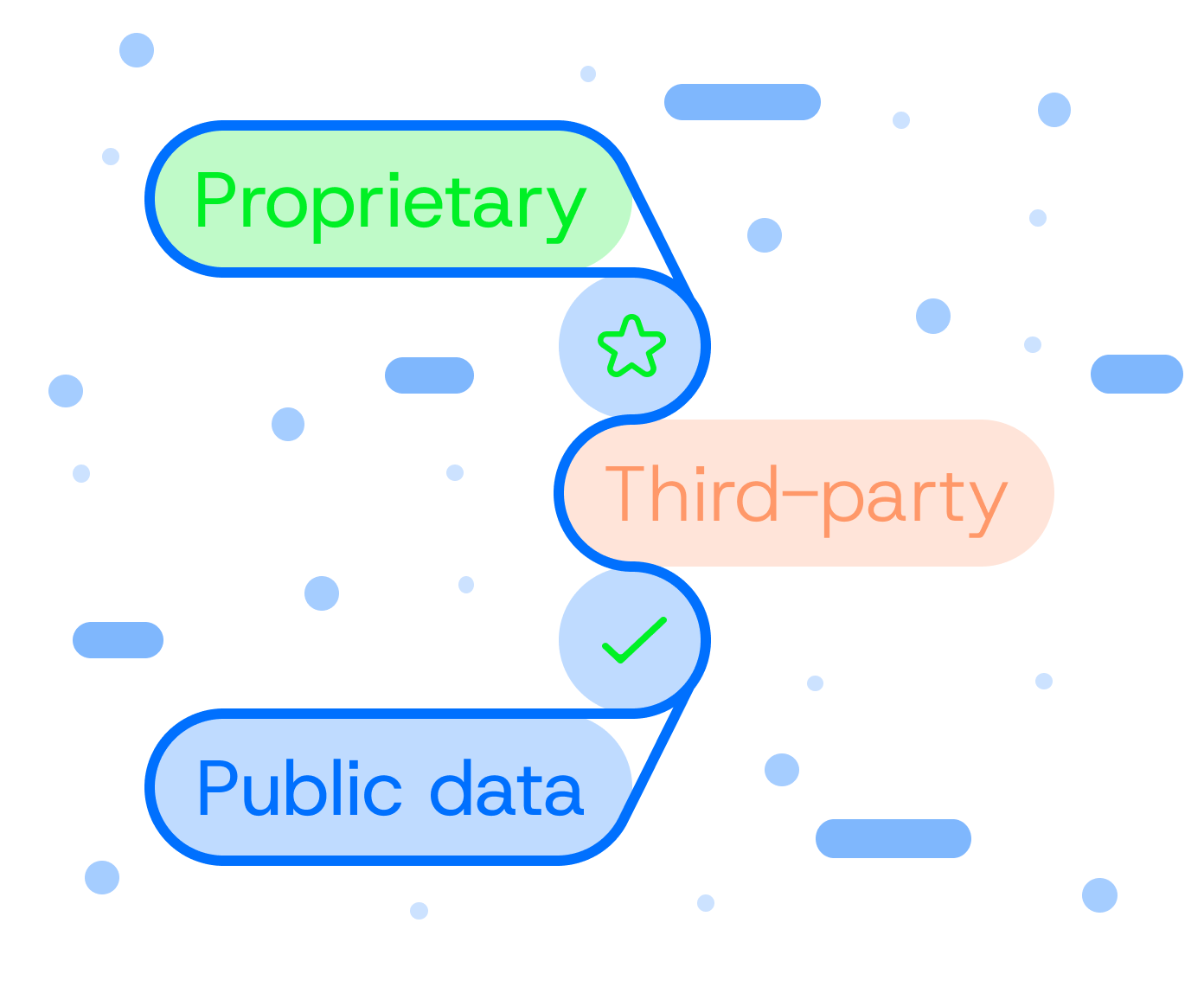

Unify data, unlock insights

White-label the technology and make your Deal Engine entirely yours. Deal Engine brings together proprietary, third-party, and public data into a single connected layer, transforming information into actionable intelligence.

Power up instead of piling on

Optimize your data spend and unlock the value in your CRM and internal documents. Deal Engine enriches your CRM with structured, intelligent data without adding clutter, complementing your existing tech stack instead of complicating it.

Build the gen AI-enabled firm of tomorrow

Fuel your AI roadmap with an integrated agentic AI intelligence layer. Accumulate and train your firm’s own proprietary dealmaking engine, built to evolve with your strategy and scale firm-wide tech-readiness.

Enabling firms to create their own edge

45M+

datapoints accumulated in a typical deployment

161

actionable insights per firm per month, on average

64

new deals on the radar in 2 months, on average

99.5%

reduction in analyst time spent on manual research

Intapp Amplify 2026: the new dealmaker's edge

What private markets leaders are learning about AI, data, and the next phase of dealmaking

Deal Engine was proud to sponsor Intapp Amplify this year, with members of our team attending both the New York and London events. Across the two conferences, Alex Bajdechi, Steven Kolatac, Phil Westcott, Martin Pomeroy, Sven Hansen and Matthew Kordonowy joined discussions with private markets and technology leaders and partners about how CRM, data infrastructure and AI are evolving across the industry.

This team has worked closely with firms running Intapp DealCloud for many years. That perspective made the conversations across Amplify particularly interesting, as the industry begins to move from experimenting with AI tools towards building the infrastructure that allows AI to operate effectively.

A major focus of the event was Intapp Celeste, Intapp’s new agentic AI layer designed to coordinate AI agents across the private markets workflow. Celeste enables firms to move beyond humans manually operating software interfaces and towards a model where professionals instruct AI agents to complete tasks across their technology stack. In practice, this means agents can interact with systems such as Intapp DealCloud to monitor signals, capture opportunities and automate operational processes.

In this best-practice architecture, Deal Engine can provide integrated market data to the DealCloud environment, and together they form a firm-owned contextual layer that feeds these agents.

Across sessions and conversations with clients, partners and technology leaders, several consistent themes emerged.

1. AI advantage is shifting from models to context

As access to frontier models becomes standardized, the advantage lies in the proprietary market context those models interrogate. For private markets firms, that context includes (i) procured market data, (ii) scraped market context, (iii) proprietary notes and insight stored in DealCloud, (iv) proprietary sourcing history and rationale, (v) the evolving investment thesis and (vi) institutional knowledge held in document management systems.

Many conversations centered on how firms can better integrate and govern this context so that AI can interrogate it and drive agentic workflows in a meaningful way.

2. Infrastructure thinking is replacing point tools

Another recurring discussion was the move away from adding more standalone tools. Over time, many firms have accumulated complex technology stacks, with different systems managing research, deal flow, market data and internal processes. While each tool serves a purpose, the overall result can be fragmented workflows and disconnected data.

The focus is now shifting towards infrastructure. Firms are thinking more about how their systems and data connect to form a coherent operating environment that AI can work across.

3. Investment strategy needs to be codified

Several conversations focused on how investment strategy is represented inside technology systems. Historically, investment theses have often existed as narrative documents or presentations. Increasingly, firms are looking at how those strategies can be translated into signals and intelligence that frontier AI can use to interpret the market and surface opportunities.

When investment criteria, sector focus and deal characteristics are “bottled” into a firm’s intelligence infrastructure, trained agents can guide sourcing workflows, highlight relevant opportunities and support AI-driven analysis.

4. Data governance is becoming central to AI strategy

Data governance was another major theme across the event. Reliable AI outputs depend on reliable inputs. As firms introduce more AI-driven capabilities, questions around data structure, permissions and oversight become increasingly important. Rather than being treated purely as an operational issue, governance and data architecture are becoming core parts of long-term AI readiness.

5. AI is supplementing relationship-driven deal origination

There was strong agreement that AI will not replace the relationship-driven nature of private markets. Instead, the focus is on supplementing existing sourcing approaches and finding the right balance. Relationship networks and inbound opportunities remain essential, but firms are increasingly augmenting these with thesis-driven intelligence that can surface opportunities earlier and more consistently.

The goal is not to remove the human element of dealmaking, but to give deal teams better context and more leverage, and to help them prioritize where they spend their time.

6. The shift from operating software to directing AI systems

One of the more forward-looking themes discussed was how professionals may interact with software in the future. Rather than manually operating multiple applications, the emerging model is one where professionals increasingly instruct AI systems to carry out tasks across those tools on their behalf.

Technologies such as Intapp Celeste reflect this direction of travel, where AI can coordinate activity across different applications while keeping the human in control of the outcome. For private markets firms, this could gradually reshape how research, origination and internal workflows are managed.

The direction of travel for private markets technology

Taken together, the conversations across the New York and London Amplify events pointed to a clear direction of travel. Frontier AI is advancing quickly, but the firms seeing the most value are those focusing on the foundations. Integrating data, structuring institutional knowledge and ensuring governance across systems are becoming critical steps in making AI useful in practice.

For private markets firms, the most valuable assets remain their people and the knowledge they accumulate over years of investing. When that knowledge is consolidated within a well-designed architecture, the organization stands to gain compounding benefits in the years ahead.

Thank you to the Intapp team for hosting two fantastic market events! The conversations at Amplify made one thing clear: firms that structure and integrate their market intelligence today will be best positioned to unlock AI tomorrow.

Speak with our team to learn more.

How GPs can future-proof their AI roadmap

As frontier AI accelerates, contextual infrastructure is becoming the defining competitive layer

Produced in association with Real Deals, and published first on their website: https://realdeals.eu.com/article/how-gps-can-future-proof-their-ai-roadmap

Private equity is entering a phase defined by intensifying competition for differentiated deal flow, compressed timelines and sharper LP scrutiny of sourcing discipline. At the same time, rapid advances in frontier AI models represent an opportunity to transform how GPs source the best opportunities, track the market, build conviction and prioritise deal team attention.

The leading firms have moved beyond experimentation, building contextual infrastructure that connects market data, document history, relationship intelligence and thesis criteria into a single, governed view of their pipeline. As noted in the recent Bain & Company Global Private Equity Report 2026, investment frameworks increasingly start with encoding “what makes us special” directly into the sourcing process. Frontier AI now allows firms to codify their ‘secret sauce’ and train agents for 24/7 coverage of the market.

Where AI advantage really sits

Frontier AI models (large language models, or LLMs) are evolving rapidly, with pattern recognition, synthesis of complex research and signal extraction becoming increasingly performant. The differentiator will be the data architecture those LLMs are pointed at.

Without unified, governed data foundations, AI output remains siloed, reflecting a single perspective rather than a firm-wide view. With contextual infrastructure, it produces a truly integrated analysis of everything the firm knows about a target.

As institutional knowledge builds up in this contextual layer, a firm’s IP accumulates, regardless of personnel changes, and compounds over time. This is where the AI advantage really sits.

The infrastructure puzzle

Deal teams today operate across an expanding ecosystem of tools capturing relationship data, market intelligence and deal documentation. Each system provides insight, but those insights often remain siloed, forcing teams to reconcile fragments rather than interpret a unified picture.

Effective deal-making depends on joining signals as they emerge. For example, indicators of momentum, risk and urgency can sit across different systems and evolve at different speeds. Without a shared contextual layer to connect them, interpretation becomes manual and episodic, and conviction has to be rebuilt repeatedly. As a result, leading firms are shifting from adding tools to building data engines.

Connecting the dots

A market data engine represents a unified, firm-owned contextual architecture that aligns market signals, CRM history, document repositories and the proprietary investment thesis, all assimilated in one governed environment. This does two things simultaneously.

First, it aligns the firm’s institutional knowledge of the market. Historical outcomes, relationship data and sector signals sit in continuous dialogue rather than in separate silos.

Second, it transforms the role of AI. Instead of querying disconnected systems, LLMs can operate within a structured context. Firms can monitor every target in their Total Investable Universe (the top of the funnel), plus all the other market participants that drive dealmaking: the bankers, the LPs and the partners. By codifying their thesis, firms can surface patterns consistent with prior successful deployments. They can also flag risk deviations earlier in the cycle.

In effect, AI becomes an amplifier of institutional memory, allowing firms to compound what they already know as models improve.

Distinguishing true strategic fit from noise

In active markets, activity can be misleading. Spikes in engagement or sector enthusiasm do not necessarily reflect strategic alignment, particularly without historical reference points.

Contextual infrastructure embeds live activity within structured reference points: prior deal performance, sector-specific metrics, competitive behaviour and relationship history. Momentum is evaluated against pattern recognition grounded in the firm’s own outcomes.

Models trained on proprietary, governed datasets can detect nuanced alignment signals that would be invisible in fragmented systems. They assess whether current signals align with the firm’s definition of a strong investment opportunity.

Many firms are currently piloting AI in narrow workflows, for instance drafting investment memos, summarising CIMs or accelerating early-stage screening ahead of IC. These applications deliver incremental efficiency. But the larger opportunity lies upstream, in strengthening prioritisation, resource allocation and conviction formation.

When relationships between deal activity, market shifts, CRM history and thesis indicators are visible early, teams focus on fewer opportunities with greater intent. Marginal prospects are deprioritised sooner. Engagement reflects conviction rather than optionality.

This is where an organisation’s architecture choices today can future-proof the firm. As models improve, they will require deeper integration into core decision-making processes. Firms that have already unified their data layers will be able to deploy increasingly advanced capabilities without re-engineering foundations. Those that have not will face a structural bottleneck: powerful models sitting atop fragmented inputs.

Future-proofing AI strategies

The next phase of AI adoption in private equity is likely to centre on integration across core workflows. Contextual infrastructure provides that foundation, anchoring interpretation in a firm-specific thesis rather than generic market signals. In doing so, it decouples a firm’s long-term competitive advantage from the pace of external technological change: as models advance, the firm can advance with them.

For some GPs, building contextual intelligence infrastructure still feels like a forward-looking innovation project. Increasingly, it is becoming a strategic requirement. The firms that thrive in the next cycle will act earlier, prioritise more selectively and engage with clearer positioning, shaping processes before competitors do.

As AI capabilities continue to advance, access to models alone will offer diminishing differentiation. What will matter is whether a firm’s data architecture allows AI to reinforce institutional judgement rather than dilute it. In competitive markets, coherence compounds, and LPs are more likely to back GPs that demonstrate discipline in their data and AI foundations.

For GPs seeking sharper conviction and faster deal execution, building a market data engine is increasingly how they will turn fragmented information into sustained conviction as AI becomes embedded across origination and decision-making.

Find out more about how Deal Engine helps dealmakers.

What mid market private equity leaders are getting right about AI

WATCH: From AI experimentation to institutional advantage in mid-market private equity

Artificial intelligence is no longer a theoretical discussion in private equity. Foundation models are advancing quickly, AI capabilities are being embedded into core systems, and firms are under increasing pressure from LPs to demonstrate that they are building modern, technology-enabled origination capabilities.

At the same time, many mid-market firms are discovering that layering AI onto existing systems does not automatically translate into better deal flow, stronger prioritization, or faster decision-making. The difference between firms seeing measurable impact and those still experimenting often has less to do with model sophistication and more to do with something less visible: whether their underlying data environment has the context to accurately reflect their investment strategy.

In two recent conversations (videos below), Alex Bajdechi and Phil Westcott shared what they are observing across the market and why the next phase of competitive advantage in private equity will be shaped more by data architecture than by model access alone.

The operational gap between AI ambition and reality

Video: Deal Engine Global VP of Sales Alex Bajdechi on what PE firms need to win in a tech-enabled world, from getting to grips with AI and tools to data and context integration.

Most mid-market private equity firms already operate with a substantial technology stack. They license third-party datasets, maintain a CRM, track opportunities, and build target company lists. On paper, the components required for AI-enabled origination appear to be in place.

In practice, the operating reality inside deal teams is often more fragmented. Alex Bajdechi, Global VP of Sales at Deal Engine, has spent years working closely with private equity firms navigating CRM strategy and origination workflows. In his experience, a consistent pattern emerges: investment thinking and investment data are rarely tightly aligned.

Sector theses are often articulated in slide decks. Investment criteria live in narrative documents. Historical deal knowledge sits in free-text CRM notes. Market intelligence resides in external platforms that are not fully integrated. The firm’s edge exists, but it is distributed and inconsistently structured.

When AI is introduced into this environment, expectations are understandably high. However, if the underlying data is incomplete, inconsistent, or disconnected, AI outputs will inevitably reflect those constraints. Teams begin to question reliability. Results require manual validation. Momentum slows.

This dynamic explains why many firms remain stuck in reactive patterns. They respond to inbound opportunities rather than systematically hunting against defined criteria. They move across disconnected applications that do not meaningfully communicate. They build lists that loosely reference strategy but are not rigorously anchored to it. They experiment with AI tools without seeing durable change in workflow.

These outcomes are not a failure of AI technology. They reflect the fact that AI systems can only reason over the structure and context they are given.

Firms beginning to see consistent impact are addressing the issue at a foundational level. Rather than prioritizing additional tools, they are translating their investment strategy into structured data. They are aligning CRM history, company tracking, market intelligence, and proprietary insights into a unified operating layer that mirrors how the firm evaluates opportunity.

For many mid-market funds, this does not require rebuilding from scratch. A significant proportion of the relevant data already exists internally. The shift lies in organizing and governing that data so it becomes coherent, auditable, and directly aligned to strategy. When that alignment is in place, AI becomes less experimental and more operational.

Turning implicit judgment into structured institutional intelligence

Video: Deal Engine Founder and CEO Phil Westcott on why, at a strategic level, the challenge extends beyond integration.

Every private equity firm believes it has differentiated insight. Partners develop pattern recognition through years of transactions. Teams form strong views on which business models scale, which management dynamics matter, and which signals are predictive within a given sector.

The challenge is that this knowledge is often implicit. It lives in individuals’ experience, fragmented notes, or loosely structured CRM entries. It influences decisions, but it is not consistently encoded.

Phil Westcott’s perspective is that if firms want AI to meaningfully enhance investment performance, this implicit knowledge must be structured and institutionalized. That process involves converting narrative theses into defined, measurable criteria. It requires mapping historical deal outcomes to structured attributes so patterns can be analyzed systematically. It means integrating CRM history, documents, and external data into a governed environment that reflects how the firm actually evaluates investments.

When this work is done, AI is no longer reasoning over generic market information. It is operating within firm-specific context. Outputs become more aligned with how the partnership thinks. Insights can be traced back to defined inputs. Over time, institutional memory compounds rather than dissipates.

This is the logic behind building a dedicated data engine: not as another point solution competing for attention, but as connective infrastructure that unifies proprietary and external data into a coherent foundation. In that environment, AI becomes materially more useful because it is grounded in structured context rather than isolated datasets.

From reactive deal flow to systematic signal generation

One of the most practical consequences of strengthening the data foundation is a shift in how origination is executed. Relationship-driven sourcing will always remain central to private equity. However, competition for differentiated opportunities has intensified, and LPs increasingly expect firms to demonstrate discipline in how they source and prioritize investments.

When investment criteria are codified and data is unified, firms can move beyond reactive workflows toward systematic signal generation. Rather than waiting for opportunities to surface, they can continuously monitor companies that match structured criteria. They can identify changes in performance, ownership, hiring patterns, or strategic direction that align with their thesis. They can prioritize outreach based on contextual triggers instead of static lists.

This approach does not replace human judgment. It sharpens it. Analysts spend less time reconciling data across systems. Associates evaluate opportunities against clearly defined attributes. Partners gain visibility into how pipeline development aligns with stated strategy. AI outputs become more reliable because they are grounded in transparent inputs.

Over time, origination becomes more repeatable. Institutional knowledge becomes embedded in infrastructure rather than residing solely in individuals. The firm’s AI narrative with LPs is supported by demonstrable architecture rather than isolated experiments.
Building long-term AI advantage on durable foundations

It is tempting to assume that competitive advantage in AI will be determined by access to the most advanced models. In reality, foundational model capabilities are improving across the industry, and access is becoming increasingly standardized. The more durable source of differentiation lies in proprietary context and disciplined data architecture.

Mid-market firms that layer AI onto fragmented systems may achieve incremental improvements, but they are unlikely to unlock sustained advantage. Firms that invest in structuring and governing their data environment are building infrastructure that compounds over time.

The practical question is not simply whether a firm is using AI. It is whether its data foundation accurately represents how it invests, how it sources, and how it creates value.

For firms willing to address that foundation directly, AI becomes more than an overlay. It becomes an embedded extension of the firm’s strategy, integrated into everyday workflows and capable of scaling institutional intelligence. That is where enduring competitive advantage begins.

Find out more about how Deal Engine helps dealmakers.

Why context engineering matters in private equity

By Martin Pomeroy, Tech Co-Founder, Deal Engine

Across private equity, firms are racing to deploy large language models against their data ecosystems. CRMs, data rooms, portfolio data, third-party datasets: everything is being fed into LLMs in the hope that insight will emerge on the other side.

In many cases, it doesn’t. Or worse, the output looks plausible but doesn’t meaningfully improve decision-making. The result is frustration, skepticism, and an unintended outcome: doubt about whether AI is actually fit for purpose at the firm level.

The problem isn’t the models themselves; it’s the lack of context.

The LLM isn’t the starting point

One of the most common mistakes we see is treating the LLM as the first step in the process rather than the last. Firms ask, “What can this model tell us?” before asking the more important question: what data should it be looking at in the first place?

Without disciplined pre-processing, even the best models will struggle. They’ll attempt to reconcile stale information with current signals, weigh irrelevant data alongside high-confidence inputs, and surface results that feel noisy or contradictory. That’s not an intelligence failure; it’s a systems failure.

The right approach is to be deliberate about what data is passed to the model, how it’s framed, and what should be excluded entirely. This is where context engineering becomes critical, and where partners like Deal Engine play a role in helping firms operationalize it correctly.

Start with the investment thesis

Context engineering begins with clarity around the investment thesis. An LLM should not be searching “the universe of companies.” It should be searching your universe, defined by sector focus, size, geography, ownership structure, growth profile, and other thesis-driven constraints. That thesis provides the lens through which everything else is evaluated.
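One way to picture “your universe” is as a set of hard constraints that every candidate must pass before any model sees it. The minimal Python sketch below shows a thesis expressed as data rather than as a slide. It assumes a hypothetical schema: the `Company` and `Thesis` fields, revenue in USD millions, growth as a fraction and the sample companies are illustrative, not Deal Engine’s data model.

```python
from dataclasses import dataclass

# Hypothetical schema: field names and units are illustrative assumptions.
@dataclass(frozen=True)
class Company:
    name: str
    sector: str
    revenue_musd: float    # revenue in USD millions
    country: str           # ISO country code
    ownership: str         # e.g. "founder", "family", "pe-backed"
    revenue_growth: float  # year-on-year, as a fraction (0.15 == 15%)

@dataclass(frozen=True)
class Thesis:
    sectors: frozenset[str]
    min_revenue_musd: float
    max_revenue_musd: float
    countries: frozenset[str]
    ownership: frozenset[str]
    min_growth: float

def in_universe(c: Company, t: Thesis) -> bool:
    """True only if the company satisfies every hard constraint in the thesis."""
    return (
        c.sector in t.sectors
        and t.min_revenue_musd <= c.revenue_musd <= t.max_revenue_musd
        and c.country in t.countries
        and c.ownership in t.ownership
        and c.revenue_growth >= t.min_growth
    )

def investable_universe(companies: list[Company], t: Thesis) -> list[Company]:
    """Filter candidates down to the thesis-defined universe before any LLM step."""
    return [c for c in companies if in_universe(c, t)]

# Hypothetical thesis and candidates.
thesis = Thesis(
    sectors=frozenset({"vertical software"}),
    min_revenue_musd=10, max_revenue_musd=100,
    countries=frozenset({"GB", "DE"}),
    ownership=frozenset({"founder", "family"}),
    min_growth=0.10,
)
companies = [
    Company("Acme Ledger", "vertical software", 40, "GB", "founder", 0.22),
    Company("Bigco Systems", "vertical software", 400, "GB", "founder", 0.30),  # too large
    Company("Canal Logistics", "logistics", 25, "DE", "family", 0.12),          # wrong sector
]
print([c.name for c in investable_universe(companies, thesis)])  # ['Acme Ledger']
```

Keeping these as deterministic filters, rather than instructions buried in a prompt, means the universe is auditable and identical from run to run; softer, judgment-based criteria can then be left to scoring and to the model.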
Once that lens is defined, firms can establish a manual data priority system: agreed-upon rules that determine which data sources carry the most weight, which signals are disqualifying, and how classifiers and scoring models should behave. An agent without memory and context is just a text generator; this structure allows AI to operate with intent rather than ambiguity.

Encoding institutional memory

Another overlooked aspect of context is institutional knowledge. If a company has already been reviewed and marked non-investable in the CRM, that matters. If it was evaluated recently, resurfacing it adds little value.

Context engineering encodes these realities directly into the workflow: don’t show me what we’ve already seen, don’t revisit decisions without new signal, respect prior conclusions unless conditions change.

Without this layer, LLMs will happily rediscover the same companies over and over again: technically “new,” but operationally useless.

Rubbish in, rubbish out… at the token level

All of this comes back to a simple principle: rubbish in, rubbish out. LLMs reason over tokens. If you overload them with loosely relevant data, conflicting timeframes, or redundant information, you increase the likelihood of confusion. Controlling tokens isn’t just about efficiency or cost; it’s about analytical clarity.

Context engineering forces discipline. It ensures that only the most relevant, highest-confidence information is passed to the model, in a structure it can reason over effectively. The goal is not more data, but better data.

Practical use cases: from noise to signal

One practical example is generating LLM-friendly company profiles. Instead of asking a model to synthesize raw data from dozens of sources, firms can provide a normalized, thesis-aligned profile that captures what actually matters for an initial investment decision. This is especially important when searching for net-new opportunities.
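The three ideas above (a source priority system, institutional memory and a token-conscious company profile) can be sketched together in a few lines of Python. Everything here is an illustrative assumption rather than how Deal Engine implements it: the source ranking, the 180-day review cooldown, the field names and the word count used as a stand-in for a real tokenizer are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical "manual data priority system": when sources disagree on a field,
# the lower number wins; ties go to the most recent observation.
SOURCE_PRIORITY = {"crm": 0, "proprietary": 1, "third_party": 2, "web_scrape": 3}

# Hypothetical profile layout: fields in the order the thesis cares about them.
PROFILE_ORDER = ["name", "sector", "revenue_musd", "ownership", "ceo", "description"]

@dataclass(frozen=True)
class Observation:
    source: str      # key into SOURCE_PRIORITY
    field_name: str  # e.g. "ceo", "revenue_musd"
    value: str
    as_of: date

@dataclass(frozen=True)
class CrmRecord:
    status: str = "unreviewed"            # e.g. "tracked", "non-investable"
    last_reviewed: Optional[date] = None

def resolve_fields(observations: list[Observation]) -> dict[str, str]:
    """Keep one value per field, chosen by source priority, then recency."""
    def rank(o: Observation) -> tuple[int, int]:
        return (SOURCE_PRIORITY[o.source], -o.as_of.toordinal())
    best: dict[str, Observation] = {}
    for o in observations:
        if o.field_name not in best or rank(o) < rank(best[o.field_name]):
            best[o.field_name] = o
    return {name: o.value for name, o in best.items()}

def should_surface(crm: CrmRecord, latest_signal: Optional[date], today: date,
                   cooldown_days: int = 180) -> bool:
    """Respect prior CRM conclusions unless a signal arrived after the last review."""
    new_signal = (latest_signal is not None and crm.last_reviewed is not None
                  and latest_signal > crm.last_reviewed)
    if crm.status == "non-investable":
        return new_signal
    if crm.last_reviewed is not None and today - crm.last_reviewed < timedelta(days=cooldown_days):
        return new_signal
    return True

def build_profile(fields: dict[str, str], max_tokens: int = 50) -> str:
    """Emit 'field: value' lines in thesis order, stopping at a token budget.

    Tokens are crudely approximated as whitespace-separated words; a real
    pipeline would count with the target model's own tokenizer.
    """
    lines, used = [], 0
    for name in PROFILE_ORDER:
        if name not in fields:
            continue
        line = f"{name}: {fields[name]}"
        cost = len(line.split())
        if used + cost > max_tokens:
            break  # stop rather than skip ahead to smaller, lower-priority fields
        lines.append(line)
        used += cost
    return "\n".join(lines)

# Hypothetical inputs for one company.
observations = [
    Observation("web_scrape", "ceo", "J. Smith", date(2024, 1, 5)),
    Observation("crm", "ceo", "A. Jones", date(2023, 6, 1)),
    Observation("third_party", "revenue_musd", "38", date(2023, 12, 31)),
    Observation("third_party", "revenue_musd", "41", date(2024, 3, 31)),
    Observation("crm", "name", "Acme Ledger", date(2023, 6, 1)),
]
crm = CrmRecord(status="non-investable", last_reviewed=date(2024, 1, 10))
if should_surface(crm, latest_signal=date(2024, 2, 1), today=date(2024, 3, 1)):
    print(build_profile(resolve_fields(observations)))
# name: Acme Ledger
# revenue_musd: 41
# ceo: A. Jones
```

In this example the company was previously marked non-investable, but a signal dated after that review lets it resurface; the CRM value for the CEO overrides the scraped one, and the newer of two third-party revenue figures wins. Because `build_profile` stops at the first field that would exceed the budget, a tight budget drops the least important fields rather than truncating the most important ones.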
Deal sourcing is inherently a haystack problem, but without context, an LLM will surface a lot of useless needles. Context engineering dramatically narrows the search space so that “net new” actually means new and relevant.

Predictability that enables trust and accelerates user adoption

When context is properly engineered, AI becomes predictable. The same inputs produce consistent outputs. The process can be repeated, audited, and trusted.

Ultimately, that’s what deal teams want: all the relevant information at a glance, presented clearly enough to make a confident yes-or-no decision. Not a black box, not a novelty, but a reliable system.

The future of AI in private equity won’t be defined by who uses the biggest model. It will be defined by who asks and answers the right question first: what data are we passing to the model?

To learn more about how Deal Engine has advised firms on this very conundrum for over a decade, get in touch. Get your demo now to see how this works in practice.

Be first to every deal.

See Deal Engine in action.

Discover how Deal Engine is providing private equity firms with the data engineering and AI capabilities fueling their competitive advantage.